Researchers see bias in self-driving software

Autonomous vehicle software used to detect pedestrians correctly identifies dark-skinned individuals less often than light-skinned ones, according to researchers at King’s College London and Peking University in China.

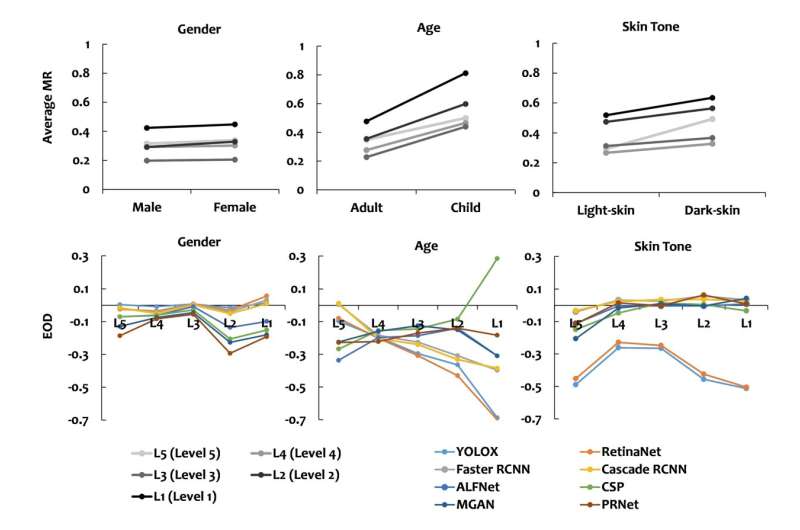

The researchers tested eight AI-based pedestrian detectors used by self-driving car manufacturers. They found a 7.5% gap in detection accuracy between lighter-skinned and darker-skinned subjects.
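One plausible way to read a figure like this is as a difference in detection rate, meaning the share of annotated pedestrians a detector actually finds, between two groups. The minimal sketch below illustrates that reading under stated assumptions: the class `GroupCounts`, the function `detection_rate_gap` and all of the counts are hypothetical and are not taken from the study, which may define its metric differently.

```python
from dataclasses import dataclass


@dataclass
class GroupCounts:
    """Detection tallies for one group of pedestrians (hypothetical data)."""
    labeled: int   # pedestrians annotated in the test images
    detected: int  # of those, how many the detector found

    def detection_rate(self) -> float:
        # Share of annotated pedestrians the detector found.
        return self.detected / self.labeled


def detection_rate_gap(light: GroupCounts, dark: GroupCounts) -> float:
    """Difference in detection rate, in percentage points (light minus dark).

    Hypothetical helper: an assumed reading of "disparity in accuracy".
    """
    return 100 * (light.detection_rate() - dark.detection_rate())


# Illustrative counts only; these are NOT the study's data.
light = GroupCounts(labeled=1000, detected=900)  # 90.0% detected
dark = GroupCounts(labeled=1000, detected=825)   # 82.5% detected
print(f"{detection_rate_gap(light, dark):.1f} percentage points")
```

With these made-up counts the gap works out to 7.5 percentage points; a real audit would also need to fix details such as how many pedestrians are annotated per group and what counts as a correct detection.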

Also troubling was the finding that the software’s ability to detect darker-skinned pedestrians diminishes further under poor lighting conditions on the road.

“Bias towards dark-skin pedestrians increases significantly under scenarios of low contrast and low brightness,” said Jie M. Zhang, one of six researchers who participated in the study.

Incorrect detection rates of dark-skinned pedestrians rose from 7.14% in daytime to 9.86% at night.

The results also showed that the software detected adults more reliably than children: adults were correctly identified at a rate 20% higher than children.

“Fairness when it comes to AI is when an AI system treats privileged and under-privileged groups the same, which is not what is happening when it comes to autonomous vehicles,” said Zhang.

She noted that while the sources of auto manufacturers’ AI training data remain confidential, it is reasonable to assume that their detectors are built on the same open-source systems used by the researchers.

“We can be quite sure that they are running into the same issues of bias,” Zhang said.

Zhang noted that bias in AI, whether intentional or not, has long been a problem. But when it comes to pedestrian recognition in self-driving cars, the stakes are higher.

“While the impact of unfair AI systems has already been well documented, from AI recruitment software favoring male applicants, to facial recognition software being less accurate for black women than white men, the danger that self-driving cars can pose is acute,” Zhang said.

“Before, minority individuals may have been denied vital services. Now they might face severe injury.”

The paper, “Dark-Skin Individuals Are at More Risk on the Street: Unmasking Fairness Issues of Autonomous Driving Systems,” was published Aug. 5 on the preprint server arXiv.

Zhang called for guidelines and laws to ensure that AI data is used in an unbiased way.

“Automotive manufacturers and the government need to come together to build regulation that ensures that the safety of these systems can be measured objectively, especially when it comes to fairness,” said Zhang. “Current provision for fairness in these systems is limited, which can have a major impact not only on future systems, but directly on pedestrian safety.

“As AI becomes more and more integrated into our daily lives, from the types of cars we ride, to the way we interact with law enforcement, this issue of fairness will only grow in importance,” Zhang said.

These findings follow numerous reports of bias inherent in large language models. Incidents of stereotyping, cultural insensitivity and misrepresentation have been observed since ChatGPT opened to the public late last year.

OpenAI CEO Sam Altman has acknowledged problems.

“We know ChatGPT has shortcomings around bias, and are working to improve it,” he said in a post last February.

More information:

Xinyue Li et al, Dark-Skin Individuals Are at More Risk on the Street: Unmasking Fairness Issues of Autonomous Driving Systems, arXiv (2023). DOI: 10.48550/arxiv.2308.02935


© 2023 Science X Network

Citation:

Researchers see bias in self-driving software (2023, August 23) retrieved 24 August 2023 from https://techxplore.com/news/2023-08-bias-self-driving-software.html

This document is subject to copyright. Apart from any fair dealing for the purpose of private study or research, no part may be reproduced without the written permission. The content is provided for information purposes only.
